The Best Paper(s) of the WACCPD workshop are chosen based on extensive reviews from expert reviewers on the Program Committee. We rank the papers based on review scores and reviewers’ confidence. The best papers demonstrate novel ideas enabling new science and are written with clarity and thorough experimental analysis. Their publication is regarded as instrumental for the field. We also encourage papers that produce open source and reproducible software, although that is not a specific qualifying criterion for the best paper award.

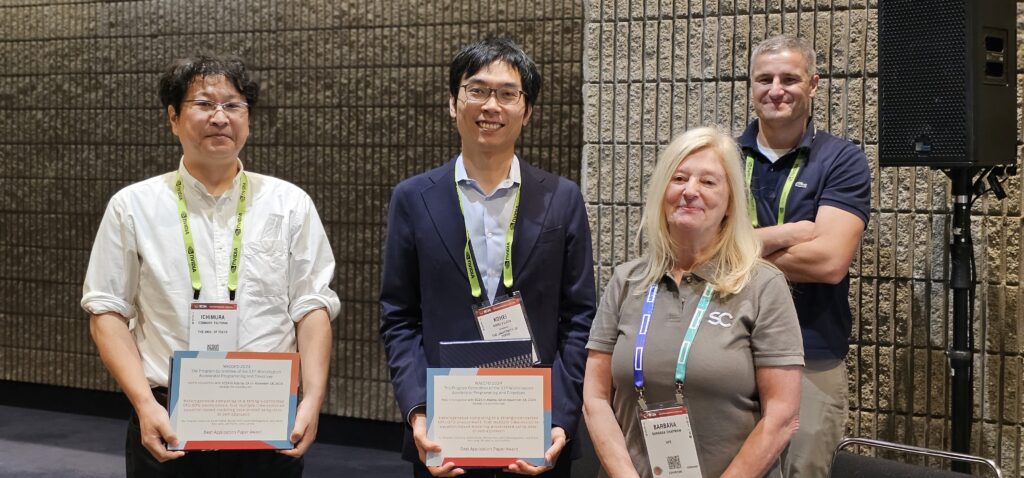

2024

The following two Best Paper Awards were awarded in 2024.

Best Tools Paper Award: ACC Saturator: Automatic Kernel Optimization for Directive-Based GPU Code

Authors: Kazuaki Matsumura, Simon Garcia De Gonzalo, Antonio J. Peña

Abstract

Automatic code optimization is a complex process that typically involves the application of multiple discrete algorithms that modify the program structure irreversibly. However, the design of these algorithms is often monolithic, and they require repetitive implementation to perform similar analyses due to the lack of cooperation. To address this issue, modern optimization techniques, such as equality saturation, allow for exhaustive term rewriting at various levels of inputs, thereby simplifying compiler design.

In this paper, we propose equality saturation to optimize sequential codes utilized in directive-based programming for GPUs. Our approach realizes less computation, less memory access, and high memory throughput simultaneously. Our fully-automated framework constructs single-assignment forms from inputs to be entirely rewritten while keeping dependencies and extracts optimal cases. Through practical benchmarks, we demonstrate a significant performance improvement on several compilers. Furthermore, we highlight the advantages of computational reordering and emphasize the significance of memory-access order for modern GPUs.

Best Application Paper Award: Heterogeneous computing in a strongly-connected CPU-GPU environment: fast multiple time-evolution equation-based modeling accelerated using data-driven approach

Authors: Tsuyoshi Ichimura, Kohei Fujita, Muneo Hori, Lalith Maddegedara, Jack Wells, Alan Gray, Ian Karlin, John Linford

Abstract

We propose a CPU-GPU heterogeneous computing method for solving time-evolution partial differential equation problems many times with guaranteed accuracy, in short time-to-solution and low energy-to-solution. On a single-GH200 node, the proposed method improved the computation speed by 86.4 and 8.67 times compared to the conventional method run only on CPU and only on GPU, respectively. Furthermore, the energy-to-solution was reduced by 32.2-fold (from 9944 J to 309 J) and 7.01-fold (from 2163 J to 309 J) when compared to using only the CPU and GPU, respectively. Using the proposed method on the Alps supercomputer, a 51.6-fold and 6.98-fold speedup was attained when compared to using only the CPU and GPU, respectively, and a high weak scaling efficiency of 94.3% was obtained up to 1,920 compute nodes. These implementations were realized using directive-based parallel programming models while enabling portability, indicating that directives are highly effective in analyses in heterogeneous computing environments.
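As a quick sanity check on the energy figures quoted in the abstract above, the fold reductions can be recomputed from the published joule values. This is only a sketch: the helper name `fold_reduction` is made up for illustration, and because the joule values are rounded as printed, the recomputed ratios may differ slightly in the last digit from the abstract's 32.2-fold and 7.01-fold.

```python
# Energy-to-solution values quoted in the abstract (joules, as published/rounded).
cpu_only_j = 9944.0
gpu_only_j = 2163.0
proposed_j = 309.0


def fold_reduction(baseline_j: float, improved_j: float) -> float:
    """Return how many times smaller the improved energy is than the baseline.

    Hypothetical helper for illustration; not taken from the paper.
    """
    return baseline_j / improved_j


cpu_fold = fold_reduction(cpu_only_j, proposed_j)
gpu_fold = fold_reduction(gpu_only_j, proposed_j)

print(f"vs CPU only: {cpu_fold:.2f}-fold reduction")
print(f"vs GPU only: {gpu_fold:.2f}-fold reduction")
```

The recomputed values land close to the quoted factors; the small gap on the GPU-only figure is presumably due to rounding of the published joule values.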

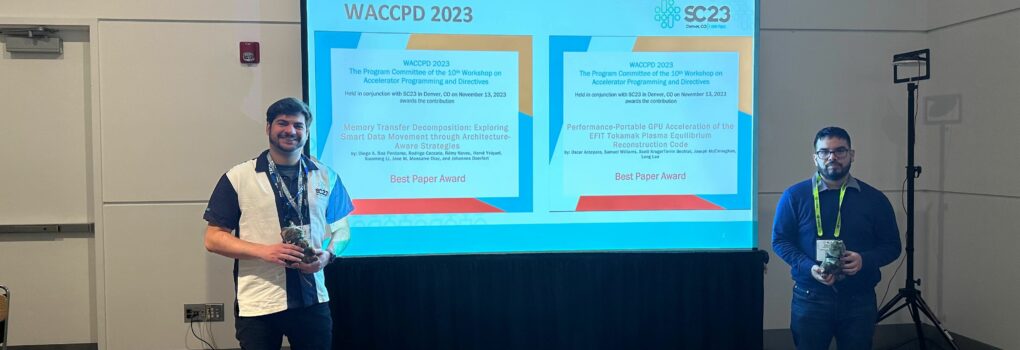

2023

The following two Best Paper Awards were awarded in 2023.

Performance-Portable GPU Acceleration of the EFIT Tokamak Plasma Equilibrium Reconstruction Code

Authors: Oscar Antepara (Lawrence Berkeley National Laboratory), Samuel Williams (Lawrence Berkeley National Laboratory), Scott Kruger (Tech-X Corporation), Torrin Bechtel (General Atomics), Joseph McClenaghan (General Atomics), Lang Lao (General Atomics)

Abstract

We present the steps followed to GPU-offload parts of the core solver of EFIT-AI, an equilibrium reconstruction code suitable for tokamak experiments and burning plasmas. For this work, we will focus on the fitting procedure that consists of a Grad–Shafranov (GS) equation inverse solver that calculates equilibrium reconstructions on a grid. We will show profiling results of the original code (CPU baseline), as well as the directives used to GPU-offload the most time-consuming function, initially to compare OpenACC and OpenMP on NVIDIA and AMD GPUs and later on to assess OpenMP performance portability on NVIDIA, AMD and Intel GPUs. We will make a performance comparison for different grid sizes and show the speedup achieved on NVIDIA A100 (Perlmutter-NERSC), AMD MI250X (Frontier-OLCF) and Intel PVC GPUs (Sunspot-ALCF). Finally, we will draw some conclusions and recommendations to achieve high-performance portability for an equilibrium reconstruction code on the new HPC architectures.

Memory Transfer Decomposition: Exploring Smart Data Movement through Architecture-Aware Strategies

Authors: Diego A. Roa Perdomo (University of Delaware, Argonne National Laboratory), Rodrigo Ceccato (University of Campinas, Argonne National Laboratory), Rémy Neveu (University of Campinas, Argonne National Laboratory), Hervé Yviquel (University of Campinas), Xiaoming Li (University of Delaware), Jose M. Monsalve Diaz (Argonne National Laboratory), and Johannes Doerfert (Lawrence Livermore National Laboratory)

Abstract

We provide an automated framework that utilizes complex hardware links while preserving the simplified abstraction level for the user. Through the decomposition of user-issued memory operations into architecture-aware sub-tasks, we automatically exploit generally underused connections of the system. The operations we support include movement, distribution, and consolidation of memory across the node. For each of them, our Auto-Strategyzer framework proposes a task graph that transparently improves performance, in terms of latency or bandwidth, compared to naive strategies. For our evaluation, we integrated the Auto-Strategyzer as a C++ library into the LLVM-OpenMP runtime infrastructure. We demonstrate that some memory operations can be improved by a factor of 5x compared to naive versions. Integrated into LLVM/OpenMP, our Auto-Strategyzer accelerates cross-device memory movement by a factor of 1.9x for large transfers, resulting in an approximately 6% decrease in end-to-end execution time for a scientific proxy application.

2022

KokkACC: Enhancing Kokkos with OpenACC

Authors: Pedro Valero-Lara, Seyong Lee, Marc Gonzalez-Tallada, Joel Denny, and Jeffrey S. Vetter (all from Oak Ridge National Laboratory (ORNL))

Abstract

Template metaprogramming is gaining popularity as a high-level solution for achieving performance portability on heterogeneous computing resources. Kokkos is a representative approach that offers programmers high-level abstractions for generic programming while most of the device-specific code generation and optimizations are delegated to the compiler through template specializations. For this, Kokkos provides a set of device-specific code specializations in multiple back ends, such as CUDA and HIP. Unlike CUDA or HIP, OpenACC is a high-level and directive-based programming model. This descriptive model allows developers to insert hints (pragmas) into their code that help the compiler to parallelize the code. The compiler is responsible for the transformation of the code, which is completely transparent to the programmer. This paper presents an OpenACC back end for Kokkos: KokkACC. As an alternative to Kokkos’s existing device-specific back ends, KokkACC is a multi-architecture back end providing a high-productivity programming environment enabled by OpenACC’s high-level and descriptive programming model. Moreover, we have observed competitive performance; in some cases, KokkACC is faster (up to 9×) than NVIDIA’s CUDA back end and much faster than OpenMP’s GPU offloading back end. This work also includes implementation details and a detailed performance study conducted with a set of mini-benchmarks (AXPY and DOT product) and three mini-apps (LULESH, miniFE and SNAP, a LAMMPS proxy mini-app).

2021

Can Fortran’s ‘do concurrent’ Replace Directives for Accelerated Computing?

Miko M. Stulajter is presenting this paper. Authors: Miko M. Stulajter, Ronald M. Caplan, and Jon A. Linker (all from Predictive Science Inc., USA).

Abstract

Recently, there has been growing interest in using standard language constructs (e.g. C++’s Parallel Algorithms and Fortran’s do concurrent) for accelerated computing as an alternative to directive-based APIs (e.g. OpenMP and OpenACC). These constructs have the potential to be more portable, and some compilers already (or have plans to) support such standards. Here, we look at the current capabilities, portability, and performance of replacing directives with Fortran’s do concurrent using a mini-app that currently implements OpenACC for GPU-acceleration and OpenMP for multi-core CPU parallelism. We replace as many directives as possible with do concurrent, testing various configurations and compiler options within three major compilers: GNU’s gfortran, NVIDIA’s nvfortran, and Intel’s ifort. We find that with the right compiler versions and flags, many directives can be replaced without loss of performance or portability, and, in the case of nvfortran, they can all be replaced. We discuss limitations that may apply to more complicated codes and future language additions that may mitigate them. The software and Singularity/Apptainer containers are publicly provided to allow the results to be reproduced.

2019

Accelerating the Performance of Modal Aerosol Module of E3SM Using OpenACC

Zhengji Zhao

Zhengji Zhao is presenting this paper, written with Hongzhang Shan (Lawrence Berkeley National Laboratory) and Marcus Wagner (Cray Inc.).

Zhengji Zhao is an HPC consultant at the National Energy Research Scientific Computing Center (NERSC) at the Lawrence Berkeley National Laboratory. She specializes in supporting materials science and chemistry applications and users at NERSC. She was part of the NERSC7 (Edison, a Cray XC30) procurement, co-leading its implementation team. Additionally, she worked on developing or extending the capability of workload analysis tools, such as system performance monitoring with the NERSC SSP benchmarks, library tracking (ALTD), and application usage analysis automation. She is also a member of the NERSC application readiness team, helping users port their applications to new platforms. Most recently she has worked on bringing checkpoint/restart capability to the NERSC workloads, and has also worked (as co-PI) on the Berkeley Lab Directed Research and Development project designed to demonstrate the performance potential of purpose-built architectures as a potential future for HPC applications in the absence of Moore’s Law. She has (co)authored more than 30 publications, including the work of developing the reduced density matrix (RDM) method for electronic structure calculations, a highly accurate alternative to wavefunction-based computational chemistry methods, and the award-winning development work of the linear scaling 3D fragment (LS3DF) method for large-scale electronic structure calculations (best poster at SC07 and a Gordon Bell award at SC08). She has served on the organizing committees of several HPC conference series, such as CUG, SC, and IXPUG. She received her Ph.D. in computational physics and an M.S. in computer science from New York University.

Abstract

Using GPUs to accelerate the performance of HPC applications has recently gained great momentum. Energy Exascale Earth System Model (E3SM) is a state-of-the-science earth system model development and simulation project and has gained national recognition. It has a large code base with over a million lines of code. How to make effective use of GPUs remains a challenge. In this paper, we use the modal aerosol module (MAM) of E3SM as a driving example to investigate how to effectively offload computational tasks to GPUs, using the OpenACC directives. In particular, we are interested in the performance advantage of using GPUs and understanding the limiting factors from both the application characteristics and the GPU or OpenACC sides.

2018

OpenACC Based GPU Parallelization of Plane Sweep Algorithm for Geometric Intersection

Anmol Paudel

Anmol Paudel is presenting this paper by Anmol Paudel and Satish Puri (both from Marquette University, USA).

Anmol is currently pursuing his graduate studies in the field of Computational Sciences. His research interests are mainly in parallel computing and high-performance computing, and his work as an RA in the Parallel Computing Lab at Marquette University is geared towards the same. He devotes most of his time to speeding up algorithms and computational methods in scientific computing and data science in a scalable fashion. Besides work, he likes to hang out with his friends, travel, and explore new cultures and cuisines.

Abstract

Line segment intersection is one of the elementary operations in computational geometry. Complex problems in Geographic Information Systems (GIS) like finding map overlays or spatial joins using polygonal data require solving segment intersections. Plane sweep paradigm is used for finding geometric intersection in an efficient manner. However, it is difficult to parallelize due to its in-order processing of spatial events. We present a new fine-grained parallel algorithm for geometric intersection and its CPU and GPU implementation using OpenMP and OpenACC. To the best of our knowledge, this is the first work demonstrating an effective parallelization of plane sweep on GPUs.
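To make the elementary operation mentioned in the abstract concrete, here is a minimal sketch of a pairwise segment intersection test based on orientation (cross-product) checks, applied by brute force to every pair. This is not the paper's plane-sweep or parallel algorithm; it is the quadratic baseline that sweep-based methods aim to improve on, and all function names are illustrative.

```python
from itertools import combinations
from typing import List, Tuple

Point = Tuple[float, float]
Segment = Tuple[Point, Point]


def orientation(p: Point, q: Point, r: Point) -> float:
    """Cross product of (q - p) and (r - p): > 0 left turn, < 0 right turn, 0 collinear."""
    return (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])


def in_bounding_box(p: Point, q: Point, r: Point) -> bool:
    """True if r lies inside the axis-aligned box spanned by p and q."""
    return (min(p[0], q[0]) <= r[0] <= max(p[0], q[0])
            and min(p[1], q[1]) <= r[1] <= max(p[1], q[1]))


def segments_intersect(s1: Segment, s2: Segment) -> bool:
    """Return True if the two closed segments share at least one point."""
    a, b = s1
    c, d = s2
    o1, o2 = orientation(a, b, c), orientation(a, b, d)
    o3, o4 = orientation(c, d, a), orientation(c, d, b)
    # Proper crossing: each segment's endpoints lie strictly on opposite sides of the other.
    if o1 * o2 < 0 and o3 * o4 < 0:
        return True
    # Touching or collinear-overlap cases: an endpoint lies on the other segment.
    if o1 == 0 and in_bounding_box(a, b, c):
        return True
    if o2 == 0 and in_bounding_box(a, b, d):
        return True
    if o3 == 0 and in_bounding_box(c, d, a):
        return True
    if o4 == 0 and in_bounding_box(c, d, b):
        return True
    return False


def all_intersections(segments: List[Segment]) -> List[Tuple[int, int]]:
    """Brute-force O(n^2) check of every pair; returns index pairs that intersect."""
    return [(i, j)
            for (i, s), (j, t) in combinations(enumerate(segments), 2)
            if segments_intersect(s, t)]


segments = [((0, 0), (4, 4)), ((0, 4), (4, 0)), ((5, 5), (6, 6)), ((2, 2), (2, 5))]
pairs = all_intersections(segments)
print(pairs)
```

Every pair here is tested independently, which is easy to parallelize but does quadratic work; a plane sweep avoids most of those tests by processing events in order, which is the ordering constraint the abstract identifies as the obstacle to parallelization.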

2017

This year featured two papers with perfect scores from all reviewers. We were pleased to give out two awards.

Implicit Low-Order Unstructured Finite-Element Multiple Simulation Enhanced by Dense Computation using OpenACC

Takuma Yamaguchi

Takuma Yamaguchi and Kohei Fujita are presenting this paper. Authors: Takuma Yamaguchi, Kohei Fujita, Tsuyoshi Ichimura, Muneo Hori, Maddegedara Lalith and Kengo Nakajima (all from the University of Tokyo, Japan).

Takuma Yamaguchi is a Ph.D. student in the Department of Civil Engineering at the University of Tokyo and holds a B.E. and an M.E. from the University of Tokyo. His research is high-performance computing targeting earthquake simulation. More specifically, his work performs fast crustal deformation computation for multiple computation enhanced by GPUs.

Kohei Fujita is an assistant professor at the Department of Civil Engineering at the University of Tokyo. He received his Dr. Eng. from the Department of Civil Engineering, University of Tokyo in 2014. His research interest is development of high-performance computing methods for earthquake engineering problems. He is a coauthor of SC14 and SC15 Gordon Bell Prize Finalist Papers on large-scale implicit unstructured finite-element earthquake simulations.

Kohei Fujita

Abstract

In this paper, we develop a low-order three-dimensional finite-element solver for fast multiple-case crust deformation analysis on GPU-based systems. Based on a high-performance solver designed for massively parallel CPU-based systems, we modify the algorithm to reduce random data access, and then insert OpenACC directives. The developed solver on ten Reedbush-H nodes (20 P100 GPUs) attained speedup of 14.2 times from 20 K computer nodes, which is high considering the peak memory bandwidth ratio of 11.4 between the two systems. On the newest Volta generation V100 GPUs, the solver attained a further 2.45 times speedup from P100 GPUs. As a demonstrative example, we computed 368 cases of crustal deformation analyses of northeast Japan with 400 million degrees of freedom. The total procedure of algorithm modification and porting implementation took only two weeks; we can see that high performance improvement was achieved with low development cost. With the developed solver, we can expect improvement in reliability of crust-deformation analyses by many-case analyses on a wide range of GPU-based systems.

Automatic Testing of OpenACC Applications

Khalid Ahmad

Khalid Ahmad is presenting this paper. Authors: Khalid Ahmad (University of Utah, USA) and Michael Wolfe (NVIDIA Corporation, USA).

Khalid Ahmad is a PhD student in the School of Computing at the University of Utah and holds an M.S. from the University of Utah. His current research focuses on enhancing programmer productivity and performance portability by implementing an auto-tuning framework that generates and evaluates different floating point precision variants of a numerical or a scientific application while maintaining data correctness.

Abstract

CAST (Compiler-Assisted Software Testing) is a feature in our compiler and runtime to help users automate testing high performance numerical programs. CAST normally works by running a known working version of a program and saving intermediate results to a reference file, then running a test version of a program and comparing the intermediate results against the reference file. Here, we describe the special case of using CAST on OpenACC programs running on a GPU. Instead of saving and comparing against a saved reference file, the compiler generates code to run each compute region on both the host CPU and the GPU. The values computed on the host and GPU are then compared, using OpenACC data directives and clauses to decide what data to compare.
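The comparison step described in the abstract can be pictured with a small, purely illustrative sketch: run the same compute region twice (here, two plain Python functions standing in for the host and GPU versions) and report which elements disagree beyond a tolerance. None of the names below come from CAST or from any compiler; the tolerances are assumptions chosen for the example.

```python
import math
from typing import Callable, List, Sequence


def compare_results(reference: Sequence[float], candidate: Sequence[float],
                    rel_tol: float = 1e-6, abs_tol: float = 1e-12) -> List[int]:
    """Return the indices where candidate differs from reference beyond tolerance."""
    if len(reference) != len(candidate):
        raise ValueError("result arrays have different lengths")
    return [i for i, (r, c) in enumerate(zip(reference, candidate))
            if not math.isclose(r, c, rel_tol=rel_tol, abs_tol=abs_tol)]


def run_and_compare(host_region: Callable[[List[float]], List[float]],
                    device_region: Callable[[List[float]], List[float]],
                    data: Sequence[float]) -> List[int]:
    """Run both versions of a compute region on copies of the same input and compare."""
    host_out = host_region(list(data))
    device_out = device_region(list(data))
    return compare_results(host_out, device_out)


# Hypothetical stand-ins for one compute region executed on the host and on the GPU.
def scale_shift_host(x: List[float]) -> List[float]:
    return [2.0 * v + 1.0 for v in x]


def scale_shift_device_ok(x: List[float]) -> List[float]:
    return [v * 2.0 + 1.0 for v in x]


def scale_shift_device_buggy(x: List[float]) -> List[float]:
    out = scale_shift_host(x)
    out[3] += 1e-3  # inject an error at one element to simulate a miscomputation
    return out


data = [float(i) for i in range(8)]
ok_mismatches = run_and_compare(scale_shift_host, scale_shift_device_ok, data)
bad_mismatches = run_and_compare(scale_shift_host, scale_shift_device_buggy, data)
print(ok_mismatches, bad_mismatches)
```

A tolerance-based comparison is used here because host and accelerator results for floating-point code commonly differ in the last bits even when both are correct; how the real feature decides what counts as a mismatch is not specified in the abstract.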

The authors received beautiful plaques and all presenters were given a copy of the new OpenMP and OpenACC books.

- Best Paper #1

- Best Paper #2

- OpenACC Book given to all speakers

- OpenMP Book given to all speakers

2016

Acceleration of Element-by-Element Kernel in Unstructured Implicit Low-order Finite-element Earthquake Simulation using OpenACC on Pascal GPUs

Takuma Yamaguchi

Takuma Yamaguchi and Kohei Fujita are presenting this paper. Authors: Kohei Fujita, Takuma Yamaguchi, Tsuyoshi Ichimura (University of Tokyo, Japan), Muneo Hori (University of Tokyo and RIKEN, Japan) and Lalith Maddegedara (University of Tokyo, Japan).

Takuma Yamaguchi is a Master’s student in the Department of Civil Engineering at the University of Tokyo and holds a B.E. from the University of Tokyo. His research is high-performance computing targeting earthquake simulation. More specifically, his work performs fast crustal deformation computation for multiple computation enhanced by GPUs.

Kohei Fujita is a postdoctoral researcher at the Advanced Institute for Computational Science, RIKEN. He received his Dr. Eng. from the Department of Civil Engineering, University of Tokyo in 2014. His research interest is development of high-performance computing methods for earthquake engineering problems. He is a coauthor of SC14 and SC15 Gordon Bell Prize Finalist Papers on large-scale implicit unstructured finite-element earthquake simulations.

Kohei Fujita

Abstract

The element-by-element computation used in matrix-vector multiplications is the key kernel for attaining high-performance in unstructured implicit low-order finite-element earthquake simulations. We accelerate this CPU-based element-by-element kernel by developing suitable algorithms for GPUs and porting to a GPU-CPU heterogeneous compute environment by OpenACC. Other parts of the earthquake simulation code are ported by directly inserting OpenACC directives into the CPU code. This porting approach enables high performance with relatively low development costs. When comparing eight K computer nodes and eight NVIDIA Pascal P100 GPUs, we achieve 23.1 times speedup for the element-by-element kernel, which leads to 16.7 times speedup for the 3 x 3 block Jacobi preconditioned conjugate gradient finite-element solver. We show the effectiveness of the proposed method through many-case crust-deformation simulations on a GPU cluster.

The authors were awarded an NVIDIA Pascal GPU.

2015

Acceleration of the FINE/Turbo CFD solver in a heterogeneous environment with OpenACC directives

Authors: David Gutzwiller (NUMECA USA, San Francisco, California), Ravi Srinivasan (Seattle Technology Center, Bellevue, Washington), Alain Demeulenaere (NUMECA USA, San Francisco, California)

Abstract

Adapting legacy applications for use in a modern heterogeneous environment is a serious challenge for an industrial software vendor (ISV). The adaptation of the NUMECA FINE/Turbo computational fluid dynamics (CFD) solver for accelerated CPU/GPU execution is presented. An incremental instrumentation with OpenACC directives has been used to obtain a global solver acceleration greater than 2X on the OLCF Titan supercomputer. The implementation principles and procedures presented in this paper constitute one successful path towards obtaining meaningful heterogeneous performance with a legacy application. The presented approach minimizes risk and developer-hour cost, making it particularly attractive for ISVs.

The authors were awarded a Quadro M6000, 12GB.

2014

Achieving portability and performance through OpenACC

Authors: J. A. Herdman, W. P. Gaudin, O. Perks (High Performance Computing, AWE plc, Aldermaston, UK), D. A. Beckingsale, A. C. Mallinson, S. A. Jarvis (University of Warwick, UK)

Abstract

OpenACC is a directive-based programming model designed to allow easy access to emerging advanced architecture systems for existing production codes based on Fortran, C and C++. It also provides an approach to coding contemporary technologies without the need to learn complex vendor-specific languages, or understand the hardware at the deepest level. Portability and performance are the key features of this programming model, which are essential to productivity in real scientific applications.

OpenACC support is provided by a number of vendors and is defined by an open standard. However, the standard is relatively new, and the implementations are relatively immature. This paper experimentally evaluates the currently available compilers by assessing two approaches to the OpenACC programming model: the “parallel” and “kernels” constructs. The implementation of both of these constructs is compared, for each vendor, showing performance differences of up to 84%. Additionally, we observe performance differences of up to 13% between the best vendor implementations. OpenACC features which appear to cause performance issues in certain compilers are identified and linked to differing default vector length clauses between vendors. These studies are carried out over a range of hardware including GPU, APU, Xeon and Xeon Phi based architectures. Finally, OpenACC performance and productivity are compared against the alternative native programming approaches on each targeted platform, including CUDA, OpenCL, OpenMP 4.0 and Intel Offload, in addition to MPI and OpenMP.

The authors were awarded a QUADRO P5000.